👁️ The New Panopticon - Corporate Surveillance, Hacker Tradecraft, and the AI Data Gold Rush

Plus: The Robot Just Served an Ace, And It’s a Bigger Deal Than You Think

I recently came across a chilling piece in Wired about how MSG is harvesting “troves” of video, emotional, and behavioral data from every visitor who sets foot in its properties. It triggered a realization: every camera in the world is no longer just “watching” us; it’s indexing us. I did some digging for this edition, and the reality goes beyond Orwellian. Our data is being monetized in real time, often without our consent or even our awareness. We’ve also reached the “Deep Blue” stage for robotics: machines can now beat us at chess and at table tennis. What’s next? Let’s dive in. As always: stay curious, but stay alert.

The New Panopticon - Corporate Surveillance, Hacker Tradecraft, and the AI Data Gold Rush

The Orwellian Parallel. Surveillance Capitalism in the Workplace

The Hacker Strategy

The Broader Trend

The Value of Behavioral Data for AI

🧰 AI Tools - Fraud and Surveillance. Tools to be aware of or used responsibly

The Robot Just Served an Ace, And It’s a Bigger Deal Than You Think

Why Table Tennis? Why Does This Matter?

Where Are We in 5 Years?

The Real Takeaway for You

📚Learning Corner - Data Privacy, Ethics, and Responsible AI Specialization

📰 AI News and Trends

TSMC Snubs ASML's $410M Chipmaking Machine as "Too Expensive" TSMC announced it will delay adopting ASML's cutting-edge high-NA EUV lithography machines until at least 2029, with co-COO Kevin Zhang calling them "very, very expensive" at roughly $410 million per unit. The rebuff sent ASML's stock lower and signals that even the world's most advanced chipmaker has limits on how fast it will absorb next-generation tooling costs.

Google Unveils Two New AI Chips to Challenge Nvidia: its eighth-generation Tensor Processing Units, the TPU 8t (for training) and TPU 8i (for inference). Announced at Google Cloud Next 2026, both are explicitly designed for the “agentic era” of AI.

Microsoft Drops $18 Billion on Australian AI Infrastructure, covering Azure AI supercomputing, cloud expansion, and national cybersecurity.

Tencent and Alibaba are in talks to invest in DeepSeek, valuing the company at over $20 billion.

Google builds elite team to close the coding gap with Anthropic

Other Tech News

Elon Musk announced the Optimus V3 robot unveil has been pushed to late July or early August to prevent rivals from copying the design before mass production begins.

Tesla reported a strong Q1 2026 earnings beat, with revenue up 16%, an increase driven in part by the global energy crisis. The company is now tripling its spending to $25B as it pivots hard into AI and robotics, funding everything from custom chips to robotaxis and humanoid robots.

Kalshi prediction site suspends three political candidates for betting on their own races

Crypto scam lures ships into the Strait of Hormuz, falsely promising safe passage

The New Panopticon

Corporate Surveillance, Hacker Tradecraft, and the AI Data Gold Rush

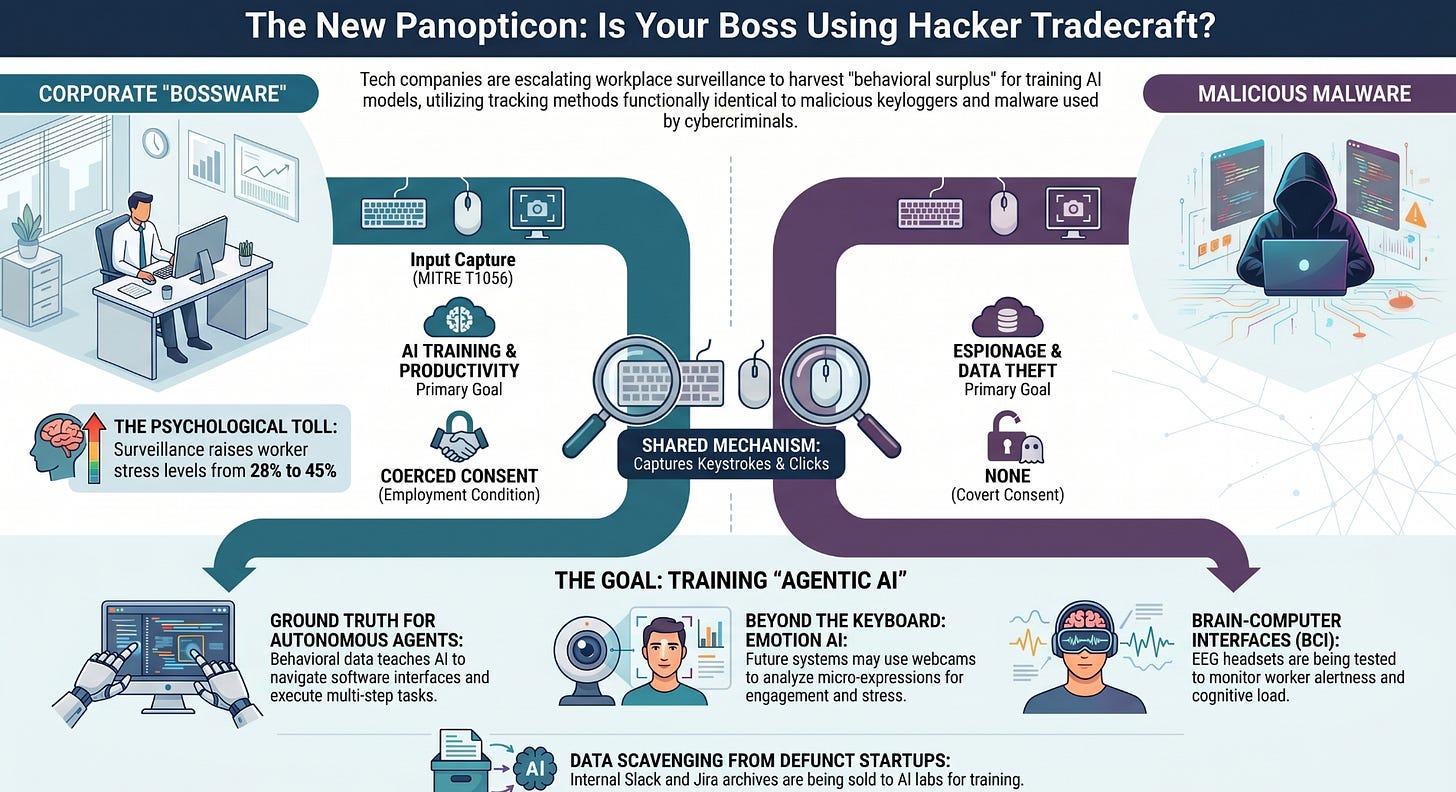

The recent revelation that Meta is deploying software to track the mouse movements, clicks, and keystrokes of its employees to train artificial intelligence models has sparked significant backlash and raised profound questions about workplace privacy. This initiative, dubbed the Model Capability Initiative (MCI), represents a significant escalation in corporate surveillance, blurring the lines between employer oversight, dystopian fiction, and malicious hacker tradecraft.

The Orwellian Parallel

Surveillance Capitalism in the Workplace

The comparison to George Orwell’s 1984 is not merely hyperbolic; it is structurally accurate. In Orwell’s dystopian society, the “telescreen” served as a two-way device that relentlessly broadcast propaganda while simultaneously monitoring individuals’ every move, ensuring total compliance and eliminating the possibility of dissent.

Meta’s MCI functions as a modern, digital telescreen. By recording every keystroke, mouse movement, and taking periodic screenshots of employee workstations, the company achieves a level of granular surveillance that mirrors the totalizing oversight of the Party in 1984. The chilling effect is immediate: employees have expressed that the tracking makes them “super uncomfortable,” recognizing that such pervasive monitoring inherently stifles free expression and creates an environment of constant scrutiny.

This phenomenon is a stark manifestation of what Harvard professor Shoshana Zuboff terms “surveillance capitalism.” Zuboff defines this as the unilateral claiming of private human experience as free raw material for translation into behavioral data. While initially applied to consumer data harvested by tech giants to create “prediction products” for targeted advertising, this logic has now turned inward. Employees’ digital labor and physical interactions with their machines are no longer just the means of production; they are the product itself, the “behavioral surplus” required to train the next generation of AI.

The Hacker Strategy

The methods Meta is employing to gather this data are functionally identical to established hacker tradecraft. In the cybersecurity domain, the practice of recording keystrokes and mouse movements is known as Input Capture, specifically categorized under the MITRE ATT&CK framework as technique T1056.

Keylogging (T1056.001)

The most prevalent form of input capture is keylogging. A keylogger is a type of surveillance technology, often deployed as malware, used to monitor and record each keystroke on a specific device. Adversaries use keyloggers to intercept sensitive information, such as passwords, financial details, and confidential communications.

Advanced Persistent Threats (APTs), sophisticated, well-resourced cyberattack groups often sponsored by nation-states, frequently utilize input capture to maintain long-term access and gather intelligence from compromised networks. The fact that a major technology corporation is deploying the same mechanism on its own workforce highlights a disturbing convergence between corporate management tools and malicious cyber espionage techniques.

The Broader Trend

Meta’s initiative is not an isolated incident but part of a broader, aggressive trend in corporate data collection driven by the insatiable data requirements of AI models. As large language models (LLMs) exhaust publicly available internet data, AI companies are desperately seeking new sources of high-quality, human-generated content.

Liquidating Defunct Startups for Data

One of the most striking examples of this trend is the emerging market for the digital remains of failed companies. Defunct startups are increasingly selling their internal communications, including Slack archives, Jira tickets, and email threads, to AI labs as training data. Companies like SimpleClosure, which assist in shutting down startups, report processing numerous deals where internal workplace data is sold for hundreds of thousands of dollars.

This practice raises severe privacy concerns. Even if attempts are made to anonymize the data, internal communications are inherently rich in personally identifiable information, candid discussions, and proprietary business logic. The transformation of private employee interactions into commodified training data without explicit, informed consent represents a massive breach of trust and a redefinition of workplace privacy.

The Rise of “Bossware” and AI Monitoring

The use of employee monitoring software, often termed “bossware,” has skyrocketed, particularly following the shift to remote work. Recent statistics indicate that 74% of US employers now use online tracking tools, and 61% utilize AI-powered analytics to measure employee productivity or behavior.

This surveillance takes a significant toll on workers. Employees in high-surveillance environments report stress levels of 45%, compared to 28% in low-surveillance settings, with 59% reporting stress or anxiety directly caused by workplace monitoring.

The Value of Behavioral Data for AI

Current AI models are highly capable of generating text and answering questions, but they struggle with executing complex, multi-step tasks within software environments. They lack the contextual understanding of how humans actually navigate interfaces, use keyboard shortcuts, switch between applications, and correct errors in real-time.

Behavioral data, the exact sequence of keystrokes, mouse movements, and clicks, provides the “ground truth” necessary to train AI agents to perform these actions autonomously. As Meta CTO Andrew Bosworth noted, the goal is to build agents that “primarily do the work,” requiring models to learn from “real examples of how people actually use [computers].” “We are training our replacements, with or without our consent.”

In the contact center industry, for example, while AI can transcribe calls and suggest responses, it cannot yet replicate the complex system navigation an experienced human agent performs to resolve a customer issue. By harvesting this behavioral layer, companies aim to bridge the gap between conversational AI and autonomous AI agents capable of replacing human labor in knowledge work.
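To make the idea of a “behavioral layer” concrete, here is a minimal sketch of how an ordered trace of input events could be turned into training examples, where each example pairs the most recent actions with the action that came next. This is purely illustrative: the `InputEvent` record, its field names, and the `to_training_pairs` helper are hypothetical and do not describe how Meta or any other company actually structures this data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InputEvent:
    """One recorded interaction (hypothetical schema, for illustration only)."""
    t_ms: int    # timestamp in milliseconds since the session started
    kind: str    # "key", "click", or "move"
    detail: str  # key name or UI element label


def to_training_pairs(events, context=2):
    """Pair each action with the `context` actions that preceded it."""
    ordered = sorted(events, key=lambda e: e.t_ms)
    pairs = []
    for i in range(1, len(ordered)):
        history = tuple(ordered[max(0, i - context):i])
        pairs.append((history, ordered[i]))
    return pairs


# A tiny made-up trace: click a search box, type "ok", press Enter.
trace = [
    InputEvent(0, "click", "search_box"),
    InputEvent(400, "key", "o"),
    InputEvent(520, "key", "k"),
    InputEvent(900, "key", "Enter"),
]
pairs = to_training_pairs(trace)

for history, next_action in pairs:
    print([e.detail for e in history], "->", next_action.detail)
```

Even in this toy form, the trace reveals what the person did, in what order, and how long they paused between steps, which is why this kind of data is both valuable for training and sensitive from a privacy standpoint.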

Future Trajectories: Beyond the Keyboard

The current focus on keystrokes and mouse movements is likely only the beginning. As the demand for behavioral and emotional data grows, corporate surveillance is poised to become even more invasive, incorporating advanced biometric and physiological monitoring.

Emerging technologies and future trends include:

• Emotion AI and Facial Analysis: Systems that use webcams to analyze facial expressions and micro-expressions to determine an employee’s emotional state, engagement level, or stress.

• Advanced Behavioral Biometrics: Moving beyond simple tracking to create unique digital fingerprints based on typing cadence, mouse movement patterns, and interaction speed, used for continuous authentication and behavioral profiling.

• Brain-Computer Interfaces (BCIs) and EEG: While currently niche, the use of electroencephalogram (EEG) headsets to monitor brainwaves for alertness and cognitive load is already being tested in high-risk industries and could eventually migrate to standard office environments.
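For readers wondering what “typing cadence” looks like as data, the sketch below computes two features commonly discussed in keystroke dynamics: dwell time (how long a key is held) and flight time (the gap between releasing one key and pressing the next). The function name, the input format, and the sample timings are assumptions made up for this example; real products use far more signals than this.

```python
from statistics import mean


def typing_features(events):
    """Summarize typing rhythm.

    `events` is a list of (key, press_ms, release_ms) tuples ordered by
    press time. This input format is an assumption for illustration.
    """
    # Dwell time: how long each key was held down.
    dwell = [release - press for _, press, release in events]
    # Flight time: gap between releasing one key and pressing the next.
    flight = [
        events[i + 1][1] - events[i][2]
        for i in range(len(events) - 1)
    ]
    return {
        "mean_dwell_ms": mean(dwell),
        "mean_flight_ms": mean(flight) if flight else 0.0,
    }


# Made-up timings for someone typing "hey".
sample = [("h", 0, 90), ("e", 150, 230), ("y", 300, 400)]
features = typing_features(sample)
print(features)
```

Note that the features depend only on timing, not on which keys were pressed. That is the point: a rhythm profile can help identify a person even when the content of what they type is never stored.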

Conclusion

Meta’s decision to track employee keystrokes and mouse movements for AI training is a watershed moment in corporate surveillance. It perfectly illustrates the mechanics of surveillance capitalism applied to the workforce, utilizing techniques indistinguishable from malicious hacker tradecraft to extract behavioral surplus. As the AI industry’s hunger for data drives the commodification of every digital interaction—from the Slack messages of dead startups to the micro-movements of current employees—the fundamental right to privacy in the workplace is being systematically dismantled. Without robust regulatory intervention and a reassertion of digital labor rights, the future of work risks resembling the totalizing surveillance of 1984, optimized not just for control, but for the automated replacement of the workers themselves.

🧰 AI Tools of The Day

Fraud and Surveillance - Tools to be aware of or used responsibly

BioCatch - Behavioral Biometrics & Fraud Detection - Collects over 3,000 behavioral signals, mouse movements, typing rhythm, swipe patterns, and interaction speed, to build a unique behavioral fingerprint for every user.

Teramind — AI-Powered Employee Monitoring - Captures keystrokes, records screens in real-time, logs application usage, tracks email content, and generates AI-driven productivity scores.

Clearview AI — Facial Recognition at Scale - It has scraped billions of public facial images to build a database used by law enforcement and government agencies to identify individuals from a single photograph.

Scale AI - AI Training Data Pipeline - The company that processes and labels the raw behavioral, image, and text data that AI companies need to train their models. Meta holds a 49% stake in the company.

FullStory - Session Replay & Behavioral Analytics - Records every mouse movement, scroll, click, and keystroke on websites and apps, creating a full video replay of user sessions.

The Robot Just Served an Ace, And It’s a Bigger Deal Than You Think

The moment AI moved from the screen into the physical world.

In 1997, IBM’s Deep Blue defeated Garry Kasparov, the world’s greatest chess champion, and the world changed overnight. Not because chess mattered, but because of what it proved: that a machine could master a domain once considered exclusively human.

Last week, we got that moment for physical robotics.

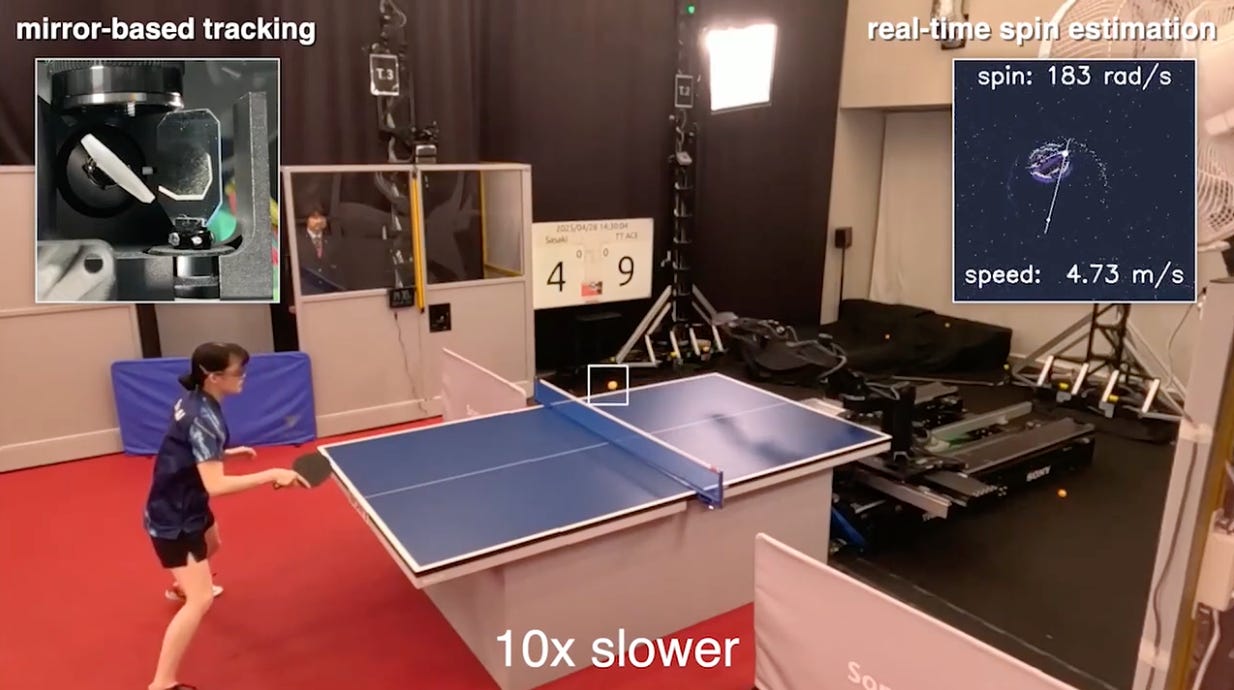

Sony AI published research in Nature introducing Ace, an autonomous robot that just beat elite human table tennis players. Not in simulation. Not with special rules or handicaps. On a regulation Olympic-sized court, under official ITTF rules, with licensed umpires judging every point. Ace won 3 out of 5 matches against elite players.

Why Table Tennis? Why Does This Matter?

Chess is information. Table tennis is physics at the edge of human capability.

The ball travels at over 20 meters per second. Spin can exceed 1,000 radians per second. The time between shots? Often less than half a second. It’s real-time, adversarial, and brutal. To compete, Ace needed to solve three hard problems simultaneously:

See faster than humans - It uses event-based cameras that track spin at 400–700Hz (your eye blinks in ~150ms)

Decide faster than humans - Its RL-trained policy updates every 32 milliseconds

Move faster than humans - A custom 8-joint robot arm hitting balls at up to 16.4 m/s
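A quick back-of-the-envelope calculation shows how tight these numbers are. The sketch below uses the figures quoted above plus one added assumption, the 2.74 m length of a regulation table, and it ignores air drag and bounce, so treat the results as rough orders of magnitude rather than measurements from the research.

```python
import math

TABLE_LENGTH_M = 2.74    # regulation table length (assumption added here)
BALL_SPEED_MPS = 20.0    # "over 20 meters per second"
SPIN_RAD_PER_S = 1000.0  # "can exceed 1,000 radians per second"
POLICY_PERIOD_S = 0.032  # policy updates every 32 milliseconds

# Time for the ball to travel one table length at constant speed.
flight_time_s = TABLE_LENGTH_M / BALL_SPEED_MPS

# How many policy updates fit into that flight.
policy_updates = flight_time_s / POLICY_PERIOD_S

# Spin expressed in revolutions per second.
spin_rev_per_s = SPIN_RAD_PER_S / (2 * math.pi)


def degrees_per_frame(frame_rate_hz):
    """Ball rotation between two consecutive camera samples."""
    return math.degrees(SPIN_RAD_PER_S / frame_rate_hz)


print(f"Flight time over one table length: {flight_time_s * 1000:.0f} ms")
print(f"Policy updates during that flight: {policy_updates:.1f}")
print(f"Spin: {spin_rev_per_s:.0f} revolutions per second")
print(f"Rotation per sample at 400 Hz: {degrees_per_frame(400):.0f} degrees")
print(f"Rotation per sample at 700 Hz: {degrees_per_frame(700):.0f} degrees")
```

Under these assumptions the ball crosses the table in about 137 ms, leaving room for only four or so policy updates, and it rotates by roughly 80 to 140 degrees between camera samples. That is why both the sensing rate and the decision rate matter so much here.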

What makes this the “Deep Blue moment” for robotics is the transfer problem. Deep Blue played in a virtual, perfectly defined world. Ace operates in a messy, noisy, unpredictable reality — with spin, air drag, table bounce variation, and a human opponent actively trying to beat it. That’s an entirely different class of problem.

This Didn’t Happen in Isolation

The same week Ace made headlines, 21 humanoid and bipedal robots completed a half-marathon in Beijing, covering 21 kilometers on the same course as human runners. Some stumbled. Some needed resets. But they finished.

Both events point to the same underlying shift: the sim-to-real gap is closing fast.

For years, robots could do incredible things in controlled lab environments but fell apart in the real world. That gap is now being bridged through better physics simulation, reinforcement learning trained on synthetic data, and hardware built to handle edge cases at scale.

Where Are We in 5 Years?

Compound this trajectory, and the picture gets serious:

2026–2027: Physical AI agents become competitive in narrow, high-speed domains (sports, warehouse logistics, precision manufacturing). Early commercial humanoid deployments at scale (Figure, 1X, Tesla Optimus).

2027–2028: Multi-task physical AI. Robots that don’t just do one thing well, but adapt across tasks in the same environment, the shift from “specialized robot” to “general-purpose robot.”

2028–2030: The labor market starts feeling it. Not replacement, but augmentation and redefinition. Roles in manufacturing, fulfillment, elder care, and field service begin to structurally shift. Early investors in robotics infrastructure and AI-physical stack plays will look very smart.

The compounding dynamic here mirrors what we saw in LLMs from 2020–2024: each breakthrough enables the next one faster. Better sensors → better training data → better policies → better hardware → better sensors again.

The Real Takeaway for You

Kinjiro Nakamura, a 1992 Olympian who watched Ace play, said it best:

“No one else would have been able to do that... but the fact that it was possible means that a human could do it too.”

That’s the pattern every time physical limits get broken, by machines or by humans. The ceiling moves. The definition of possible expands.

📚Learning Corner

Data Privacy, Ethics, and Responsible AI Specialization - Addresses the legal, ethical, and technical dimensions of everything discussed today, from AI training data collection and behavioral surveillance to privacy law and responsible AI governance.